The risk in having too many risks…

Confusing failure modes for risks is one of the most common structural mistakes in medical device risk analysis — and one of the most costly to fix later. This article explains the difference between hazards, hazardous situations, and harm under ISO 14971, why a bloated risk analysis undermines your whole risk management process, and how one simple syntax rule can help you build a cleaner, more actionable document from the start.

Do you have more than 40 risks in your device risk analysis, and the device isn't even invasive?

Most likely, they are not risks. They are failure modes. And confusing the two is one of the most common — and costly — mistakes I see in early-stage medtech.

A risk list that has grown out of control creates real problems:

It diverts focus from the risks that actually matter, the ones you should be able to recite off the top of your head.

It invites inconsistencies and duplication: in a document that large, no colleague will review every row in detail, but an auditor will flag them immediately.

It turns every product feature into a hazard or a risk control, each of which then warrants stricter testing requirements down the line.

And it makes traceability in post-market surveillance and clinical evaluation a genuine operational nightmare.

I've seen many well-meaning startups suffer through the consequences of a badly designed risk analysis. The QARA who built it might feel proud of its thoroughness. But the rest of the team loses interest and never truly owns their risk areas. Management stops using it for decision-making. Product design becomes cluttered with risk controls — warnings, untouchable features — that nobody can explain.

This kills the collaborative and iterative spirit that is essential for good risk management.

So what's the difference between a risk and a failure mode?

A risk analysis table is built from three distinct layers, as described in ISO 14971 and ISO 24971:

Hazard categories: the nature of the potential source of harm (energy, software, misuse; full list in ISO 24971)

Hazardous situations: the circumstances in which people are exposed to a hazard, including failure modes and external causes

Harm: the actual injury or damage to health that may result

The most common mistake is conflating hazards with hazardous situations — that is, treating failure modes as if they were risks in their own right. The terminology doesn't help, admittedly.

One simple strategy to keep your risk analysis clean

Use a fixed syntax to write your risks consistently. Here's one I find practical:

THERE IS A RISK OF [who] [hazard type faced] ORIGINATING FROM [list of failures and hazardous situations] WHICH MAY LEAD TO [harm type — pick only the highest level]

Two examples:

For a hardware device: There is a risk of the patient coming into contact with high voltage (electrical energy), originating from a) damage to the connecting cable, b) manufacturing defect, c) poorly designed insulation — which may lead to electric shock.

For a SaMD: There is a risk of the physician receiving inaccurate output from the device (incorrect medical decision), originating from a) algorithm design limitations, b) algorithm execution error, c) user interface failure, d) cybersecurity attack, e) unclear instructions for use — which may lead to delay in treatment.

Notice how multiple failure modes collapse into a single, well-defined risk. That's the point. Your risk analysis becomes shorter, more focused, and far easier to maintain over time.
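You may keep the risk table as structured data, such as a spreadsheet export or a small script, rather than as free text. In that case each slot of the syntax can become its own field and the sentence can be generated from the fields. The Python sketch below assumes a hypothetical `Risk` record. Its field names (`who`, `exposure`, `hazard_type`, `origins`, `harm`) are illustrative and not taken from ISO 14971. It reproduces the hardware example above, with a comma in place of the dash before "which may lead to".

```python
from dataclasses import dataclass, field
from string import ascii_lowercase


@dataclass
class Risk:
    """One risk row, with one field per slot of the fixed syntax (hypothetical names)."""
    who: str          # who is exposed, e.g. "the patient"
    exposure: str     # how they face the hazard, e.g. "coming into contact with high voltage"
    hazard_type: str  # hazard category, e.g. "electrical energy"
    origins: list[str] = field(default_factory=list)  # failure modes and hazardous situations
    harm: str = ""    # highest-level harm only

    def sentence(self) -> str:
        """Render the row as a single risk statement."""
        # Label origins a), b), c) ... in the order given (up to 26 in this sketch).
        labelled = ", ".join(
            f"{letter}) {origin}" for letter, origin in zip(ascii_lowercase, self.origins)
        )
        return (
            f"There is a risk of {self.who} {self.exposure} ({self.hazard_type}), "
            f"originating from {labelled}, which may lead to {self.harm}."
        )


hardware = Risk(
    who="the patient",
    exposure="coming into contact with high voltage",
    hazard_type="electrical energy",
    origins=[
        "damage to the connecting cable",
        "manufacturing defect",
        "poorly designed insulation",
    ],
    harm="electric shock",
)
print(hardware.sentence())
```

Keeping `origins` as a list is what lets several failure modes sit under one risk. A newly identified failure mode is appended to that list rather than added as a new row.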

If you're building the table manually, write the syntax in your header row. If you're using AI-assisted tools, enter it as a prompt constraint or use it to validate the output. If you're reviewing an existing table, run each row against it.
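When reviewing an existing table, one rough way to run each row against the syntax is a pattern match. It flags rows that cannot be read as a full risk statement. This is a minimal Python sketch under stated assumptions:

- The regular expression is an illustrative approximation of the syntax. It is case-insensitive and accepts a comma, hyphen or dash before "which may lead to".
- A row that fails the match is only a candidate failure mode for a human to look at, not a verdict.

```python
import re
from typing import Optional

# Illustrative approximation of the fixed risk syntax: case-insensitive, with an
# optional comma before "originating from" and a comma, hyphen or em dash
# (\u2014) allowed before "which may lead to".
RISK_SYNTAX = re.compile(
    r"^there is a risk of (?P<who_and_hazard>.+?),?\s+originating from\s+"
    r"(?P<origins>.+?)[\s,\u2014-]+which may lead to\s+(?P<harm>.+?)\.?$",
    re.IGNORECASE,
)


def parse_risk(row: str) -> Optional[dict[str, str]]:
    """Split a risk statement into its parts, or return None if it does not fit."""
    match = RISK_SYNTAX.match(row.strip())
    return match.groupdict() if match else None


def rows_to_review(rows: list[str]) -> list[str]:
    """Return the rows that do not fit the syntax: candidate failure modes."""
    return [row for row in rows if parse_risk(row) is None]


table = [
    "There is a risk of the patient coming into contact with high voltage "
    "(electrical energy), originating from a) damage to the connecting cable, "
    "b) manufacturing defect, c) poorly designed insulation, which may lead to "
    "electric shock.",
    "Damage to the connecting cable",
    "Algorithm execution error",
]

for row in rows_to_review(table):
    print("Review:", row)
```

A regular expression only checks shape, not meaning. A row can match the pattern and still name a failure mode where the hazard should be, so the check complements a human review rather than replacing it.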

A risk analysis should be accessible to the whole team, actionable in decision-making, and sustainable as the product evolves. Getting the structure right from the start is one of the highest-leverage things a QARA can do in an early-stage company.

Deep Dive: Getting the Structure Right

Risk Analysis vs FMEA

Both are part of the Risk Management process under ISO 14971, but they serve different purposes and are not interchangeable.

Risk Analysis is mandatory. It is the top-level document that captures your device's safety profile — the full picture of what could go wrong, for whom, and with what consequences. Think of it as the billboard for your device's safety. It needs to tell a meaningful story, not overwhelm the reader with noise.

FMEA (Failure Mode and Effects Analysis) is a supporting analytical method — good practice, and often expected by auditors, but not explicitly required by ISO 14971 as a named technique. It is the drill-down tool: you take each component, subsystem, or process and ask systematically, how could this fail, and what would the effect be?

The same FMEA logic appears under different names depending on the domain:

In SaMD, it is often formalised as a Software Hazard Analysis (required under IEC 62304 as part of software risk management)

In usability engineering, it underpins the Use-Related Risk Analysis (URRA), which traces use errors and abnormal use to potential harm — a core deliverable under IEC 62366-1

In cybersecurity, it is effectively a vulnerability analysis or threat modelling exercise (with reference to MDCG 2019-16 and IMDRF guidance on cybersecurity)

Each of these domain-specific analyses follows the same logic: identify how something could fail, then trace that failure to a potential harm. The outputs of all of them feed into one Risk Analysis for your product — not multiple separate risk documents.

This is where the structural confusion often starts. Teams run an FMEA, a URRA, and a software hazard analysis, and then copy the failure modes directly into the Risk Analysis table. The result is a document that mixes hazards, hazardous situations, and failure modes in the same column, under the label "risk." Multiply that across a product with many subsystems, and you quickly reach 60, 80, or 100+ rows — most of which are not risks at all.

The three-layer structure

ISO 14971 and its companion standard ISO 24971 are clear on the terminology, even if teams frequently blur the distinctions in practice:

Hazard: a potential source of harm — an inherent property of the device or its environment (e.g. electrical energy, ionising radiation, software decision output)

Hazardous situation: the circumstance in which a person is exposed to a hazard — this is where failure modes, use errors, and external conditions live

Harm: the physical injury or damage to health or property that results

A well-structured risk analysis row moves through all three layers. The failure modes — however many there are — belong in the hazardous situation column, not in a row of their own. That single structural choice is what keeps the document manageable.
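The structural choice of moving failure modes into the hazardous situation column can be sketched as a small consolidation step. The sketch works as follows:

1. It takes rows as they might come out of an FMEA, URRA or software hazard analysis.
2. It groups them under one risk per exposed person and hazard.
3. It keeps only the highest-severity harm, as the syntax asks.

The `FailureModeRow` fields, the 1 to 5 severity scale and the severity values in the sample rows are hypothetical. A real table would use the severity scale defined in the risk management plan. Hardware and SaMD rows are mixed here purely for illustration.

```python
from dataclasses import dataclass, field
from typing import NamedTuple


class FailureModeRow(NamedTuple):
    """One row as it might arrive from an FMEA, URRA or software hazard analysis."""
    who: str
    hazard: str
    failure_mode: str
    harm: str
    severity: int  # hypothetical 1-5 scale, higher is worse


@dataclass
class ConsolidatedRisk:
    """One risk row: the failure modes live in `origins`, not in rows of their own."""
    who: str
    hazard: str
    origins: list[str] = field(default_factory=list)
    harm: str = ""
    severity: int = 0


def consolidate(rows: list[FailureModeRow]) -> list[ConsolidatedRisk]:
    """Group failure modes under one risk per (who, hazard), keeping the worst harm."""
    risks: dict[tuple[str, str], ConsolidatedRisk] = {}
    for row in rows:
        risk = risks.setdefault((row.who, row.hazard), ConsolidatedRisk(row.who, row.hazard))
        if row.failure_mode not in risk.origins:  # drop duplicates, keep first-seen order
            risk.origins.append(row.failure_mode)
        if row.severity > risk.severity:  # "pick only the highest level" of harm
            risk.harm, risk.severity = row.harm, row.severity
    return list(risks.values())


fmea_rows = [
    FailureModeRow("the patient", "electrical energy", "damage to the connecting cable", "electric shock", 4),
    FailureModeRow("the patient", "electrical energy", "manufacturing defect", "electric shock", 4),
    FailureModeRow("the patient", "electrical energy", "poorly designed insulation", "electric shock", 4),
    FailureModeRow("the physician", "incorrect medical decision", "algorithm execution error", "delay in treatment", 3),
    FailureModeRow("the physician", "incorrect medical decision", "user interface failure", "delay in treatment", 3),
]

for risk in consolidate(fmea_rows):
    print(risk.who, "|", risk.hazard, "|", "; ".join(risk.origins), "|", risk.harm)
```

Grouping on (who, hazard) is a design choice for this sketch. A team might prefer to keep two risks apart when their hazardous situations differ substantially. That would mean adding a field to the grouping key.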

A note on harm classification

For the harm column, the IMDRF Adverse Event Terminology provides a standardised, hierarchical coding system that is increasingly expected in technical documentation and is directly useful in post-market surveillance reporting. Using it consistently from the start — rather than free-text descriptions — saves significant effort later when feeding into your PMSR or PSUR.

Practical checklist

Can every row in your table be read using the [who / hazard type / originating from / harm] syntax? If not, it may be a failure mode, not a risk.

Are failure modes consolidated under their parent risk, rather than listed as standalone rows?

Is the harm column using consistent, ideally IMDRF-aligned terminology?

Could a new team member read the Risk Analysis and understand the device's core safety story in under 30 minutes?

References

ISO 14971:2019 and ISO/TR 24971:2020, available from the Estonian Standards store (a legitimate national standards body selling the same official text) at a significantly lower cost than directly from ISO

IMDRF Adverse Event Terminology browser — for standardised harm classification

IEC 62304 (software lifecycle) and IEC 62366-1 (usability engineering) — for domain-specific hazard analysis requirements that feed into the Risk Analysis

Methodology note: This article is based on two original LinkedIn posts (first, second) written by me, reflecting my professional experience and personal perspectives on risk management in medical device development. Claude AI assisted in combining and expanding the posts into a broader article for this blog, integrating background context, regulatory references, and a structured Deep Dive section. All regulatory perspectives and practical recommendations are my own, and all content has been reviewed by me for accuracy.

Crans-Montana, a compliance perspective

In the wake of the devastating NYE fire in Crans-Montana, this post reflects on the critical role of compliance and individual accountability in preventing national tragedies, reminding us that regulation is only a burden until the moment it becomes our last line of defense.

Regulation is often seen as a pain in the neck… until it isn't. A national tragedy takes place in Switzerland on NYE, and we ask ourselves: why didn’t this underground bar have compliant emergency exits? Why wasn’t it inspected in more than 5 years? How could a combustible soundproofing material be permitted to line the whole ceiling? How could staff pull off such a deadly stunt (regularly!) with zero awareness of fire risk? Why the heck were the victims-to-be filming instead of fleeing??

And in particular, how could ALL these hazards manifest simultaneously??

I am horrified by the incident in Crans-Montana (news article). It should never have happened. It brings back the painful memory of the Grenfell Tower fire in 2017, which shocked me deeply as I was living in London at the time.

We all assume and expect to be protected by regulation. We all assume and expect compliant and responsible behaviour of others. The reality is that if things go south, we are on our own to face the consequences. We all have a responsibility to do our bit, whether it’s fire safety or health.

Being alert to risks, and raising the awareness of others too. Informing yourself and at least doing your best, rather than looking away. Holding others accountable by asking questions or reporting unsafe practices. Raising your voice to policy-makers when the rules aren't enough.

I hope my work contributes a little to all of these, within the realm of healthtech of course, not fire regulation.

As a result, Switzerland has now banned the use of pyrotechnics in indoor spaces and is investigating not only the bar owners but also the municipality, which did not inspect the bar ONCE in 5 years. The sale of any flammable soundproofing materials is also under scrutiny.

Could this bring a bigger wave of respect for regulation and compliance into 2026? Or am I hopelessly wishful?

Today is a national day of mourning in Switzerland for the 40 victims, mostly teenagers. It breaks my heart to think of what’s left of the 116 injured.

I pray for them, and that something like this is never allowed to happen again: by regulators, by business owners, by fellow citizens, by luck (that's a factor too 🍀), by all of us doing our small, responsible part in society.

(Image rights: https://www.bbc.com/news/articles/c9dvyyjyj18o)

What can we learn from… a progressive Notified Body?

Medtech governance in Europe is highly decentralised, with product certification "outsourced" to private entities (i.e. Notified Bodies). This would be complicated enough even if classic Notified Bodies didn't bring their own enormous challenges to the table: lack of availability, lack of competence in new technologies, lack of transparency and communication. Companies feel they have no control over their destiny.

So what is Scarlet doing differently as a Notified Body?

1️⃣ Focus on one subject matter (digital devices only) to ensure top-level, up-to-date competence

2️⃣ Fit the conformity assessment process around the applicant and their timelines

3️⃣ Engage transparently and pragmatically about expectations in pre-sub Structured Dialogues

4️⃣ Scale resources flexibly with externals

and, my favourite,

5️⃣ Train their trusted consultants in an independent manner, to increase the chance of high-quality submissions and enable more effective reviews.

Which other NBs do this? None that I'm aware of. But please share any good practices you've experienced.

That's why I'm particularly enthusiastic to have taken part in this special training session last Friday! Not only with a like-minded NB, but among a group of 18 like-minded regulatory experts ❤️

New times and new tech need a new approach: a mantra of Edge Compliance. I hope other NBs, existing and new, will follow this example.

Note: I'm not affiliated with Scarlet, but I believe the initiative deserves genuine praise and a wider audience.

Thank you, Dan Levy and Sandy Wright at Scarlet, also for the photo credit. Stellar job!

LLM for Quality tasks

A short story on using AI for a QARA task and coming up with a framework for doing it faster (4h down to 1h) while keeping it under control.

Task at hand:

A client received its inspection report from the authority by post, in the national language, and needed it digitised and in English in order to act on it.

1️⃣ Convert scanned pdf to electronic document

ChatGPT 👎 didn’t identify the text in the scanned PDF.

Gemini and NotebookLM did it, but I was unconvinced by the accuracy 🧐.

Google Drive did the job: upload the PDF, then open it as a Google Doc. ✅

2️⃣ Translate electronic document

ChatGPT and Gemini kept hallucinating badly 😵‍💫.

The "Translate document" function in Google Docs returned a poor, literal translation 🥴.

NotebookLM was accurate but skipped content 😥.

I ended up working section by section with Gemini's in-text "AI Refine" function, using a very meticulous prompt, and checking the result manually in a side-by-side table 🥵.

3️⃣ Format electronic document similar to the original

ChatGPT and NotebookLM didn’t work 🤕.

Gemini could make some basic improvements via the in-text "AI Refine" function, but not via the built-in "Ask Gemini" in Google Docs, nor via the browser chat. It's interesting how much these differ in capability.

In the end, the formatting fix was mostly manual 🤯.

Conclusion:

After 4 miserable hours on the task, with many failed attempts and far too much manual input, I achieved a satisfactory document.

But I still wanted to get to the bottom of this. There must be a better way??

So I restarted from scratch with a different approach, which I'd summarise as a cycle inspired by the PDCA / Agile loops we use in Quality:

⤵️ Plan: Ask AI for the right tools and prompts to achieve your goal. And importantly, "ask AI to ask you" questions or point out what is unclear in order to help you refine your requirements accurately.

▶️ Do: Approach it step by step. Run your refined prompt for your SUBtask in your selected tool. Quick review of the output, refine the prompt. Change tool if needed.

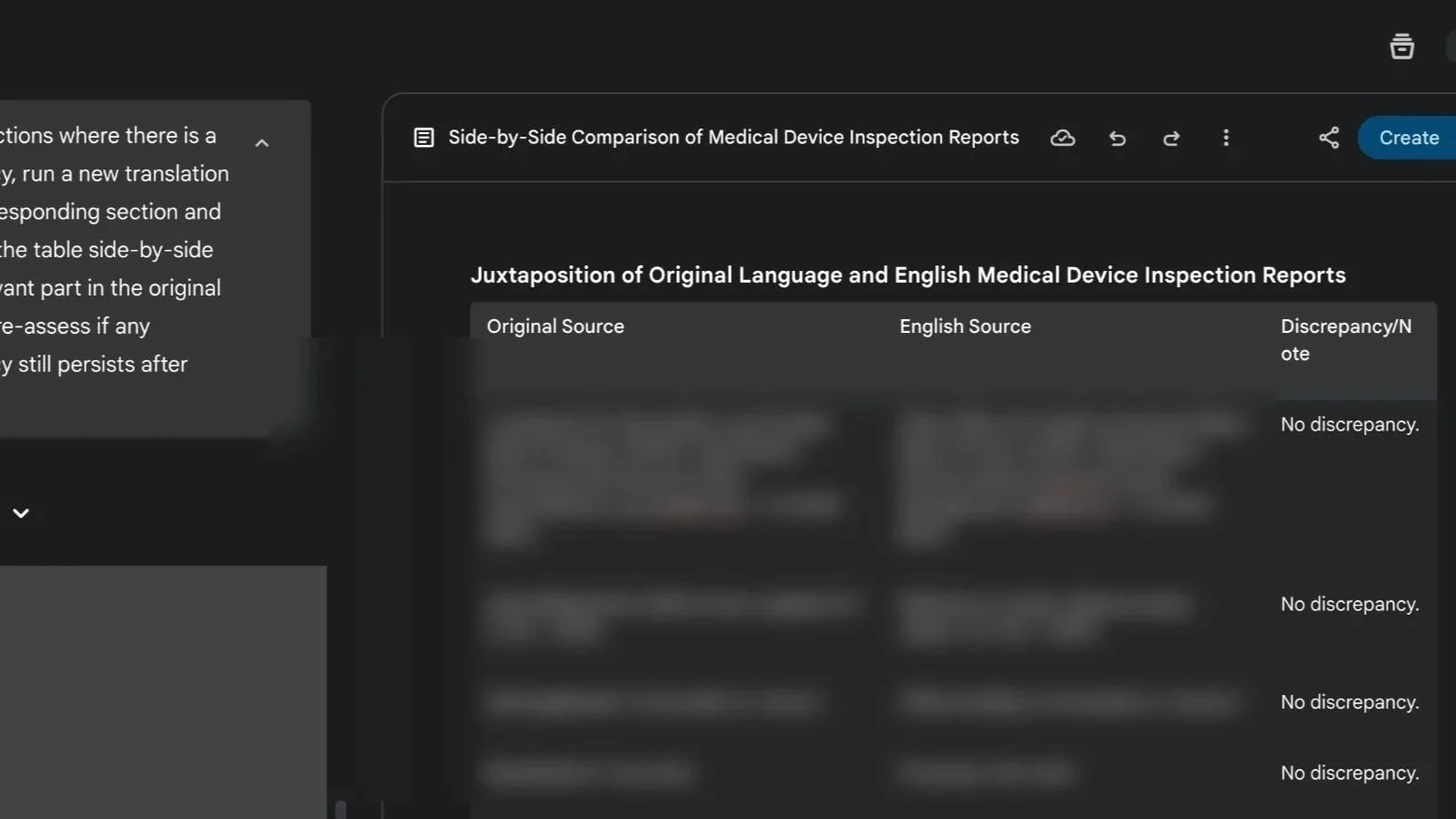

⏯️ Check: Get AI to verify its results and help you check them manually by highlighting any discrepancies. For example, “juxtapose the original and translated content in a table section by section and note any discrepancies between the two versions of the text”.

🔁 Act: Tell AI to correct the discrepancies, then re-run the verification step to update results.
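A simple mechanical cross-check can back up the Check step above without relying on the AI at all. The Python sketch below is an assumption-laden illustration, not the workflow described in this post.

It pairs sections of the original and the translation one-to-one in a side-by-side table. It then flags sections that are missing or whose digits differ. The premise is that dates, deadlines and clause numbers in an inspection report should survive translation unchanged.

The sample German sections are hypothetical. Localised formats, such as a date written out in words, will raise false alarms, so the check only points to sections worth re-reading.

```python
import re
from itertools import zip_longest

DIGITS = re.compile(r"\d+")


def digits_in(text: str) -> list[str]:
    """All digit runs, sorted, so '12.03.2024' and '12/03/2024' compare equal."""
    return sorted(DIGITS.findall(text))


def side_by_side(original: list[str], translated: list[str]) -> list[tuple[int, str, str, str]]:
    """Pair sections one-to-one and add a note for mechanical discrepancies."""
    table = []
    for number, (src, dst) in enumerate(zip_longest(original, translated, fillvalue=""), start=1):
        if not src or not dst:
            note = "missing section"
        elif digits_in(src) != digits_in(dst):
            note = "numbers differ"
        else:
            note = ""
        table.append((number, src, dst, note))
    return table


# Hypothetical sample sections: original in German, draft English translation.
original = ["Frist: 30 Tage", "Abschnitt 4.2 nicht erfüllt", "Nächste Inspektion 2026"]
translated = ["Deadline: 30 days", "Section 4.3 not fulfilled"]

for number, src, dst, note in side_by_side(original, translated):
    print(f"{number} | {src} | {dst} | {note or 'ok'}")
```

Any flagged section can then go back to the AI as a targeted correction in the Act step, instead of re-running the whole document.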

Eventually, working this way, I achieved the same result in 1h and with greater confidence in its accuracy. Still not extremely fast, but considerably faster!

I am curious, how would others have approached this dull task?

If “wellness” cosmetics are regulated, why isn't “wellness” tech?

While diving into a new cosmetics project, I saw this angle, then tilted my head and... "I couldn’t help but wonder": do people realise cosmetics carry real compliance duties despite making no medical claims?

Cosmetics must show, at minimum:

▶️ Manufacturing quality: GMP (ISO 22716) + regional or national rules (e.g., EU 1223/2009, FDA 21 CFR 700)

▶️ Safety & testing: microbial load, stability/shelf life, toxicological assessment

▶️ Accountability & traceability: labelling, INCIs disclosure, product registration (e.g., EU CPNP), adverse event reporting

▶️ Governance: a designated Responsible Person, inspection-ready procedures & technical documentation

In principle, not at all far from medical devices, just rightly lighter in scope and depth.

I’m seeing both directions lately: wellness products drifting into medical territory and claim downgrades to step out of it (especially post-MDR transition end). As medical regulations tighten, new categories - and opportunities - emerge at the edges. The fluid interface is such an exciting place to be ❤️🔥.

My view:

Health/body-affecting products should be subject to proportionate standards and accountability. I’d favour a distinct “health and wellness-tech” category with its own rules (as cosmetics have, and as the FDA is exploring) over forcing medical device frameworks onto them.

Do you agree? Are you also seeing more health product manufacturers review their claim strategy (whether upwards or downwards)?

Quality whistleblower - hero vs martyr

How do you make yourself heard when you MUST raise the red flag over design quality, production compliance or clinical safety?

It's an incredibly difficult position to be in, whether you're acting from inside a company or as an external reviewer. The stakes are high, and office politics (if not higher politics), budget concerns and your own self-limiting beliefs all come into play, giving you many reasons not to follow your gut. Maybe I'm wrong, maybe it's all fine. Or maybe it isn't?

I've been in this position a couple of times as PRRC. It's dire: sleepless nights, conflict escalation. But escalate to whom? If the technicians' or QA's voice is not heard, and your voice as PRRC is not heard, you hope that external parties such as lawyers, consultants, CROs and reviewers will be more effective gatekeepers, only to find that they aren't. They may overlook things or have their own interests at play. Then who is left to protect the patient? Who is going to stand up and stop the chain of events before it's too late?

The story of Frances Oldham Kelsey, the FDA medical reviewer who refused to approve thalidomide in the 1960s, is a great example. Similar patterns can be seen in other preventable disasters such as OceanGate's Titan submersible, Boeing's 737 MAX MCAS software or Chernobyl, to name the most famous. All had a long chain of brave flag raisers in a culture that shut them down.

Culture is key and of utmost importance in medtech. Accountability, feedback and psychological safety create space for risks to be raised and taken seriously at any stage of a project. So-called "Type 1 decisions" in business, i.e. irreversible ones (launch or not launch?), need raw, truthful information, not just the glossed-over version the manager is willing to lend an ear to.

A culture that embraces Quality as its biggest asset and strategic partner will value anyone who raises issues, mistakes or inefficiencies, with a view to preventing not only harm but also resource and reputational risks.

I'm deeply passionate about driving such cultural shifts and helping teams innovate in the most progressive, forward-looking and responsible ways.

What is “quality” really?

I am often asked: what is “quality”?

I find it funny how often this question comes up in my field and how much debate it sparks, over and over again. I cannot think of many other professions that routinely lead you to re-discuss and reassess their own purpose and definition at such philosophical depth.

Each one of us has a different take on what “quality” or “good” means, depending on what matters most to us or on the object we are talking about. And that's what makes it so personal and ambiguous. Good quality furniture is sturdy and durable. A good quality smartphone should be fast and reliable. Yet good quality in the service industry may be tied to delivery and customer support.

What do all these have in common? Expectations and accountability.

As the end user you want to know that what you are getting - whatever that is - really meets your needs. You want to know that what you read about it is trustworthy and not only the result of creative marketing. You also want to know that if something goes wrong with it, the provider will have your back and will take responsibility for it.

So although “quality” may at first seem a highly subjective attribute, it eventually boils down to something tangible and product-agnostic. Know your customers' needs, know what you're giving them, act upon problems. This is effectively what quality standards are all about: a set of requirements for any industry (or, in certain cases, a specific sector) that unifies what quality means for all and defines how to prove it unambiguously.

In the case of medical products, quality is clearly paramount. Expectations of genuine health outcomes and service accountability are closely tied to our own wellbeing, our safety, our privacy and security, or that of our loved ones. As much as we may try to inform ourselves and tell good quality products from bad ones, there's a limit to what one individual's understanding can achieve. Products and companies alike can be incredibly diverse and complex, and to effectively scrutinise different therapeutic options one would need to be an expert in medicine, science, technology, law, security and privacy, all at once. As consumers and as patients, this is an unfair burden. This is why the health industry is regulated and requirements are standardised. This is why there is a system, with diverse teams of experts working on our behalf and in our interest, from pre-market approval to post-market surveillance. National health authorities safeguard users to ensure transparency and accountability.

Like any complex system and human endeavour, the quality framework is not perfect, of course. Unfortunately, operating quality in a compliant way doesn't translate 100% into assurance of good practice or good intentions, as some companies choose to treat it as a mere checkbox exercise. But even that, one could argue, is better than nothing. At the opposite end of the spectrum, companies with good practices can really struggle to align with the ever more complex standardised system. The complexity and resource investment can sometimes be overwhelming and off-putting for young startups, and this can hinder innovation and the delivery of value to users who need it.

To me, personally, quality means "good practice, consistently". Good as in responsible, safe, ethical, just, effective, efficient. Consistent as in habitual, auditable, reliable. My previous experience in science, process optimisation, sustainability and Corporate Social Responsibility (CSR) has given me a broad perspective that for a long time I worried was too scattered. Yet somehow it seems to have converged into this mission and passion for driving quality. The most satisfying feeling is to see a young startup through, from visualising its early quality ambition to reaching a mature governance structure in a value-aligned quality system. By helping organisations understand what quality truly means to them and making it workable for them, we advance the value proposition of the whole sector. To me, it means making things better in this world, one step at a time.